Sitemaps are an ingredient that completes a website’s SEO package. They are certainly still relevant, since they ensure content is not overlooked by web crawlers and reduce the resource burden on search engines. Sitemaps are a way to “spoon feed” search engines your content to ensure better crawling. Let’s look at how this is done.

XML Format

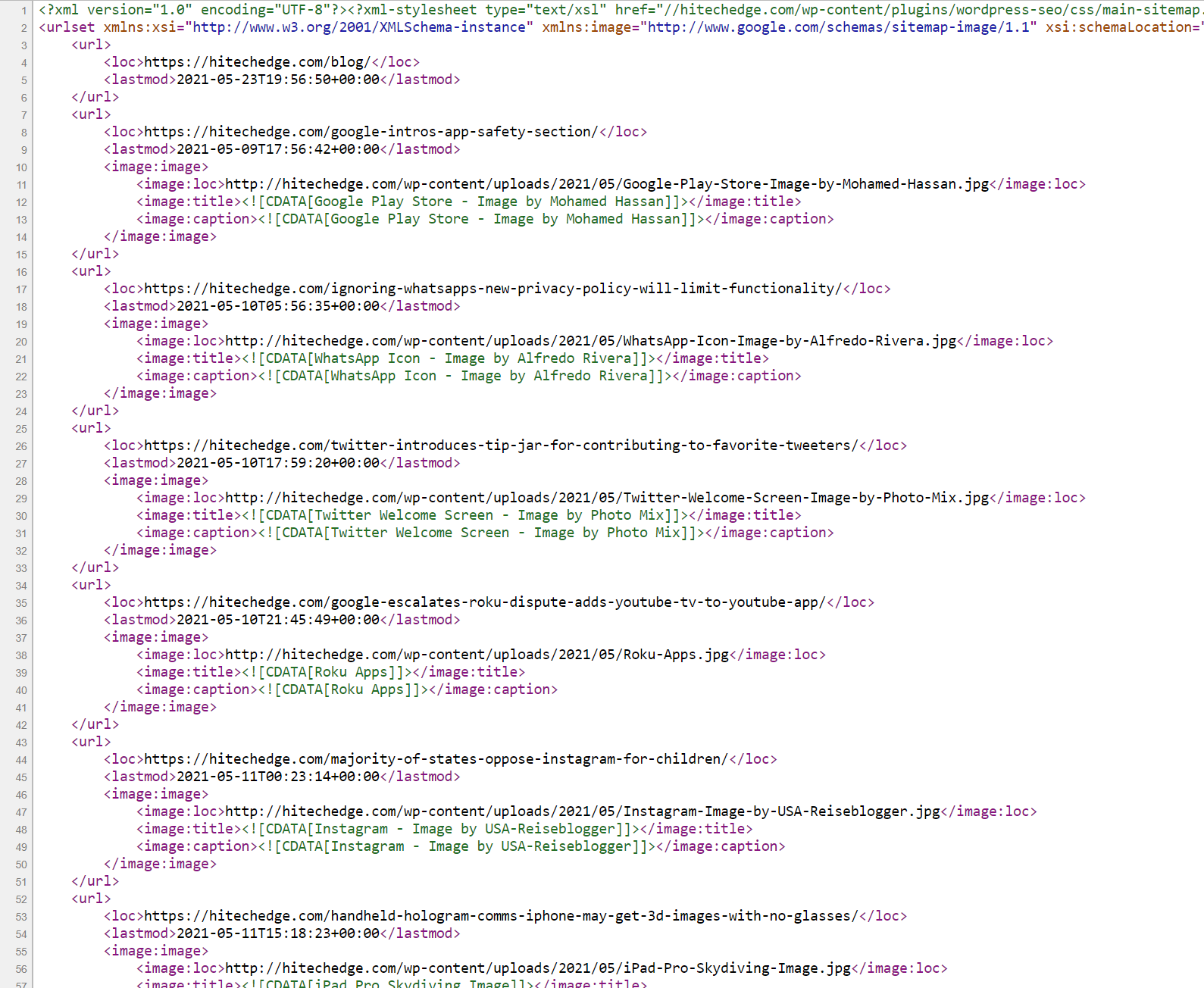

The sitemap file is the XML document search engines fetch to discover a site's URLs. The elements it can contain are defined by the sitemap protocol (sitemaps.org) and include urlset, url, loc, lastmod, changefreq, and priority; of the per-URL elements, only loc is required. A minimal example looks like:

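```xml
<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>http://example.com/</loc>
    <lastmod>2006-11-18</lastmod>
    <changefreq>daily</changefreq>
    <priority>0.8</priority>
  </url>
</urlset>
```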
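Rather than writing this XML by hand, a site can generate it. The Python below is a minimal sketch using the standard library's `xml.etree.ElementTree`; the `build_urlset` function and its dict-based input are hypothetical conveniences for illustration, not part of any sitemap tool.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_urlset(entries):
    """Return sitemap XML (bytes) for an iterable of dicts.

    Hypothetical input format: each dict needs a "loc" key; "lastmod",
    "changefreq" and "priority" are optional, like the protocol's elements.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for entry in entries:
        url = ET.SubElement(urlset, "url")
        # Emit children in a fixed order, skipping optional ones not given.
        for tag in ("loc", "lastmod", "changefreq", "priority"):
            if tag in entry:
                ET.SubElement(url, tag).text = str(entry[tag])
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)

if __name__ == "__main__":
    xml_bytes = build_urlset([{
        "loc": "http://example.com/",
        "lastmod": "2006-11-18",
        "changefreq": "daily",
        "priority": 0.8,
    }])
    print(xml_bytes.decode("utf-8"))
```

A side benefit of using an XML library: ElementTree escapes characters such as `&` in URLs, which the protocol requires to be entity-escaped.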

A single sitemap file may list at most 50,000 URLs and must be no larger than 50 MB (52,428,800 bytes) uncompressed, but a site can spread its URLs across several sitemap files. Those files are then listed in a sitemap index file, which uses a similar but distinct format:

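```xml
<?xml version="1.0" encoding="UTF-8"?>
<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap>
    <loc>http://www.example.com/sitemap1.xml.gz</loc>
    <lastmod>2004-10-01T18:23:17+00:00</lastmod>
  </sitemap>
  <sitemap>
    <loc>http://www.example.com/sitemap2.xml.gz</loc>
    <lastmod>2005-01-01</lastmod>
  </sitemap>
</sitemapindex>
```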
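Splitting a large site across files and tying them together with an index could look roughly like the Python sketch below. It assumes the full URL list is already in memory; the helper names and the `sitemapN.xml.gz` file names are hypothetical, and only the 50,000-URL limit is enforced here, so the size limit still needs its own check.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000  # protocol limit per sitemap file

def chunk_urls(urls, size=MAX_URLS_PER_SITEMAP):
    """Split a list of URLs into consecutive lists of at most `size` items."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]

def build_sitemap_index(sitemap_locs, lastmod=None):
    """Return sitemap index XML (bytes) listing each child sitemap URL."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for loc in sitemap_locs:
        sitemap = ET.SubElement(index, "sitemap")
        ET.SubElement(sitemap, "loc").text = loc
        if lastmod is not None:
            # Optional: when this child sitemap last changed.
            ET.SubElement(sitemap, "lastmod").text = lastmod
    return ET.tostring(index, encoding="utf-8", xml_declaration=True)

if __name__ == "__main__":
    urls = [f"http://www.example.com/page{i}" for i in range(120_000)]
    chunks = chunk_urls(urls)  # three files: 50,000 + 50,000 + 20,000 URLs
    # Hypothetical child file names, one per chunk.
    locs = [f"http://www.example.com/sitemap{n}.xml.gz"
            for n in range(1, len(chunks) + 1)]
    print(build_sitemap_index(locs).decode("utf-8"))
```

The index file is itself capped at 50,000 child sitemaps, which is ample for almost any site.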

There are sitemap variants for ordinary web pages as well as ones tailored to video and other media, mobile content, geo data, and more. If the SEO payoff justifies the effort, take the time to learn the different types and build the one that best fits your website’s architecture.
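As one example of a specialized variant, Google's image extension adds an `image` namespace to an ordinary urlset. This Python sketch assumes that extension's namespace URI (`http://www.google.com/schemas/sitemap-image/1.1`) and its `image:image`/`image:loc` elements; `build_image_sitemap` is a hypothetical helper, and support for any extension should be confirmed in each engine's own documentation.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
# Assumed: namespace of Google's image sitemap extension.
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"

# Serialize the core namespace as the default and the extension as "image:".
# Note: register_namespace changes ElementTree's global prefix table.
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("image", IMAGE_NS)

def build_image_sitemap(pages):
    """pages: dict mapping a page URL to the list of image URLs on that page."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page, images in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page
        for img in images:
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = img
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)

if __name__ == "__main__":
    print(build_image_sitemap(
        {"http://example.com/": ["http://example.com/logo.png"]}
    ).decode("utf-8"))
```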

Location

Sitemaps can be named anything, but by convention the file is called ‘sitemap.xml’ and placed in the root of the site, e.g. http://example.com/sitemap.xml. If multiple files are needed, they can be named ‘sitemap1.xml’, ‘sitemap2.xml’, and so on. Sitemap files can also be gzip-compressed, e.g. ‘sitemap.xml.gz’. Sitemaps can also live in subdirectories (a sitemap generally may only list URLs at or below its own directory) or be submitted for multiple domains, but the cases that call for this are rare.
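Compressing a sitemap needs nothing beyond Python's `gzip` module. In this sketch, `write_gzipped_sitemap` is a hypothetical helper; it checks the protocol's 50 MB limit, which applies to the uncompressed size, before writing anything.

```python
import gzip
from pathlib import Path

# The sitemap protocol's 50 MB cap applies to the *uncompressed* file.
MAX_UNCOMPRESSED_BYTES = 50 * 1024 * 1024  # 52,428,800 bytes

def write_gzipped_sitemap(xml_bytes, path):
    """Gzip-compress sitemap XML bytes and write them to `path`."""
    if len(xml_bytes) > MAX_UNCOMPRESSED_BYTES:
        raise ValueError("sitemap exceeds 50 MB uncompressed; split it up")
    path = Path(path)
    with gzip.open(path, "wb") as fh:
        fh.write(xml_bytes)
    return path
```

For example, `write_gzipped_sitemap(xml_bytes, "public/sitemap.xml.gz")` would write into a hypothetical `public/` web root, producing a file served as http://example.com/sitemap.xml.gz.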

Submission

Search engines can learn about a sitemap in three ways:

• Robots.txt

• Ping request

• Submission interface

First, sitemaps can be specified in the robots.txt as follows:

Sitemap: http://example.com/sitemap.xml

The robots.txt file is then placed in the root of the domain, http://example.com/robots.txt, and when crawlers read the file they will find the sitemap and use it to improve their understanding of the website’s layout.
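To check which sitemaps a crawler will pick up, Python's `urllib.robotparser` can extract the Sitemap lines from a robots.txt body (its `site_maps()` method requires Python 3.8 or later). The `sitemaps_from_robots` wrapper is a hypothetical name used for illustration.

```python
from urllib.robotparser import RobotFileParser

def sitemaps_from_robots(robots_txt):
    """Return the Sitemap URLs declared in a robots.txt body (a string)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when the file has no Sitemap lines.
    return parser.site_maps() or []

if __name__ == "__main__":
    robots = (
        "User-agent: *\n"
        "Disallow: /private/\n"
        "\n"
        "Sitemap: http://example.com/sitemap.xml\n"
    )
    print(sitemaps_from_robots(robots))
```

Because the Sitemap directive is independent of any User-agent group, it can appear anywhere in the file.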

Second, search engines can be notified through “ping” requests, such as:

http://searchengine.com/ping?sitemap=http%3A%2F%2Fwww.yoursite.com%2Fsitemap.xml

These “ping” requests are a simple mechanism search engines have offered so websites can announce updated content. The placeholder domain (“searchengine.com”) is replaced with the engine’s actual ping endpoint, and the sitemap URL passed in the sitemap parameter must be URL-encoded.
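The encoded query string in the example is just the sitemap URL percent-encoded with every reserved character escaped. A minimal Python sketch, where `build_ping_url` is a hypothetical helper and `searchengine.com` remains a placeholder:

```python
from urllib.parse import quote

def build_ping_url(endpoint, sitemap_url):
    """Append a percent-encoded sitemap URL to an endpoint ending in '='."""
    # safe="" makes quote() encode ':' and '/' too (':' -> %3A, '/' -> %2F).
    return endpoint + quote(sitemap_url, safe="")

if __name__ == "__main__":
    print(build_ping_url("http://searchengine.com/ping?sitemap=",
                         "http://www.yoursite.com/sitemap.xml"))
```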

Lastly, every major search engine has offered a webmaster interface for submitting a sitemap, alongside a URL for notifying the engine that a website’s sitemap has changed. Here are four major search engines and their notification URLs:

Google – http://www.google.com/webmasters/tools/ping?sitemap=

Yahoo! – http://search.yahooapis.com/SiteExplorerService/V1/updateNotification?appid=SitemapWriter&url=

Ask.com – http://submissions.ask.com/ping?sitemap=

Bing – http://www.bing.com/webmaster/ping.aspx?siteMap=

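These ping requests return nothing beyond whether the request was received. Submitting through an engine’s webmaster interface, by contrast, reports information about the sitemap, such as any errors it found. Several of these endpoints have since been retired: Yahoo! Site Explorer has been shut down, and Google has deprecated its sitemap ping endpoint, leaving robots.txt and the webmaster consoles as the dependable routes.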
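Because a ping reply only says whether the request arrived, checking the HTTP status is all a client can do. The Python sketch below assumes an endpoint that still accepts pings; `ping_search_engine` and its `opener` parameter (a hook so the network call can be faked in tests) are hypothetical.

```python
import urllib.error
import urllib.request

def ping_search_engine(ping_url, opener=urllib.request.urlopen, timeout=10):
    """Send a sitemap ping; return True if the engine answered with a 2xx status.

    The reply carries no diagnostics about the sitemap itself, so the HTTP
    status is the only thing inspected.
    """
    try:
        with opener(ping_url, timeout=timeout) as response:
            return 200 <= response.status < 300
    except OSError:
        # URLError (including HTTPError for 4xx/5xx) and socket timeouts
        # are all OSError subclasses.
        return False
```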

If your website runs on WordPress or a similar platform, plugins such as Google XML Sitemaps will do the heavy lifting for you: generating the sitemap and notifying search engines including Google, Bing, Yahoo!, and Ask. Standalone tools such as the XML-Sitemaps.com generator can also create sitemaps, and Google’s Webmaster Tools lets you submit and check them.

As we’ve said before, making sitemaps “shouldn’t take precedence over good internal linking, inbound link acquisition, a proper title structure, or content that makes your site a resource and not just a list of pages.” However, taking just a little bit of time with a good tool will help you complete your SEO package with a sitemap. Take this tutorial and make your site known!
