Facebook is stepping up its fight against misinformation, including taking action against people who repeatedly share it.

Social media platforms have become one of the biggest conduits of misinformation about climate change, vaccinations, elections, social issues and more. Facebook has been taking an increasingly tough stance against misinformation, adding fact checkers, warning labels and other measures.

The company is now taking action against individuals who repeatedly share misinformation. One part of its strategy is to give users more context about Pages that spread it.

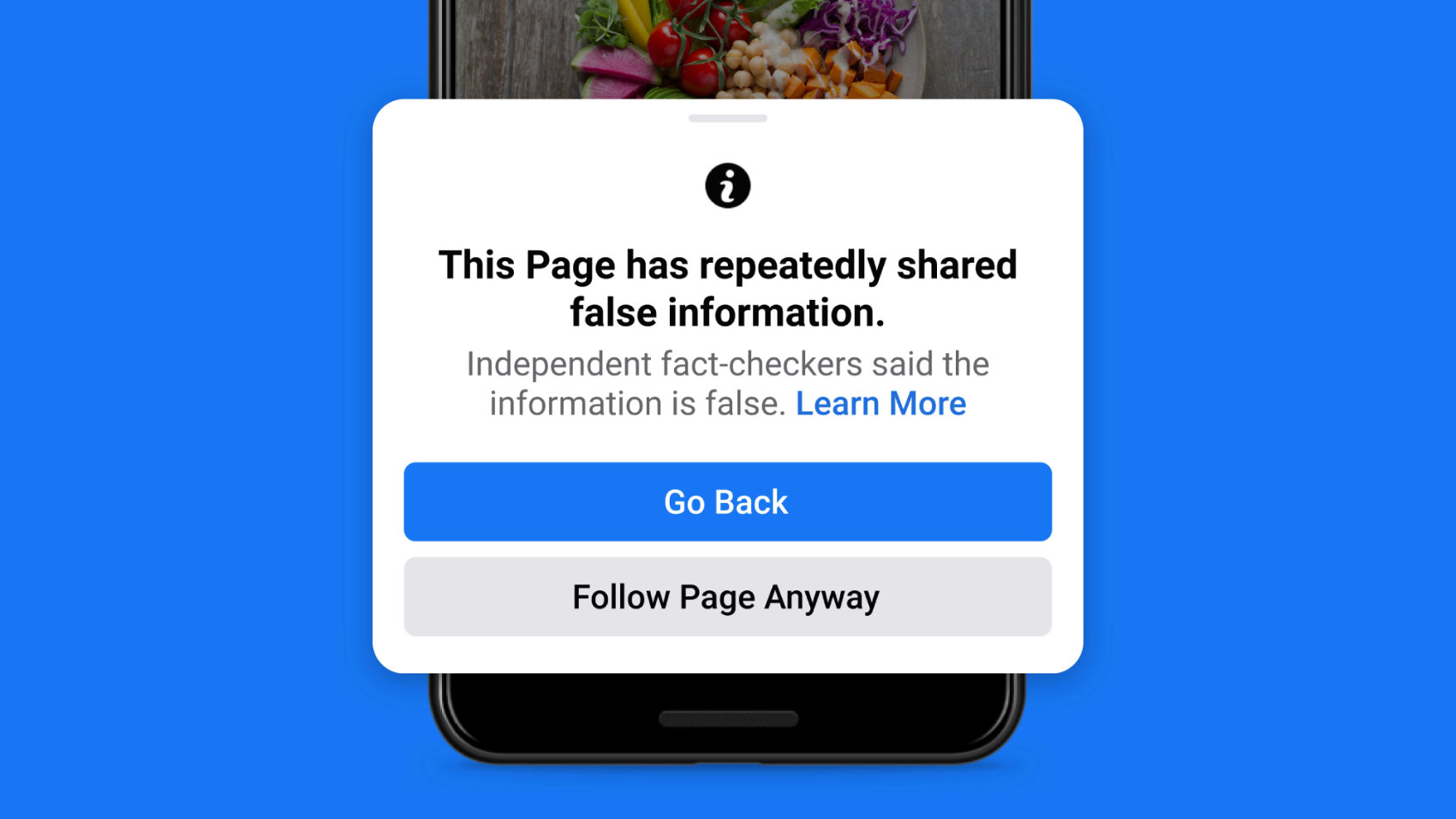

“We want to give people more information before they like a Page that has repeatedly shared content that fact-checkers have rated, so you’ll see a pop up if you go to like one of these Pages,” says the company’s blog. “You can also click to learn more, including that fact-checkers said some posts shared by this Page include false information and a link to more information about our fact-checking program. This will help people make an informed decision about whether they want to follow the Page.”

Individuals who continue to share misinformation may face additional penalties. Previously, Facebook would reduce a single post’s distribution in News Feed if it contained misinformation. Starting today, however, Facebook will reduce the distribution of all of an individual’s posts if that person repeatedly shares misinformation.

The company is also improving the notifications people receive when they attempt to share misinformation; these now include the fact-checking article that debunks the post.

It remains to be seen if the changes will have the desired effect. One thing is certain, however: Facebook has declared war on misinformation and the people who share it.

Based on recent news about Facebook’s AI development, however, this may soon become a non-issue.
